Yomeroo

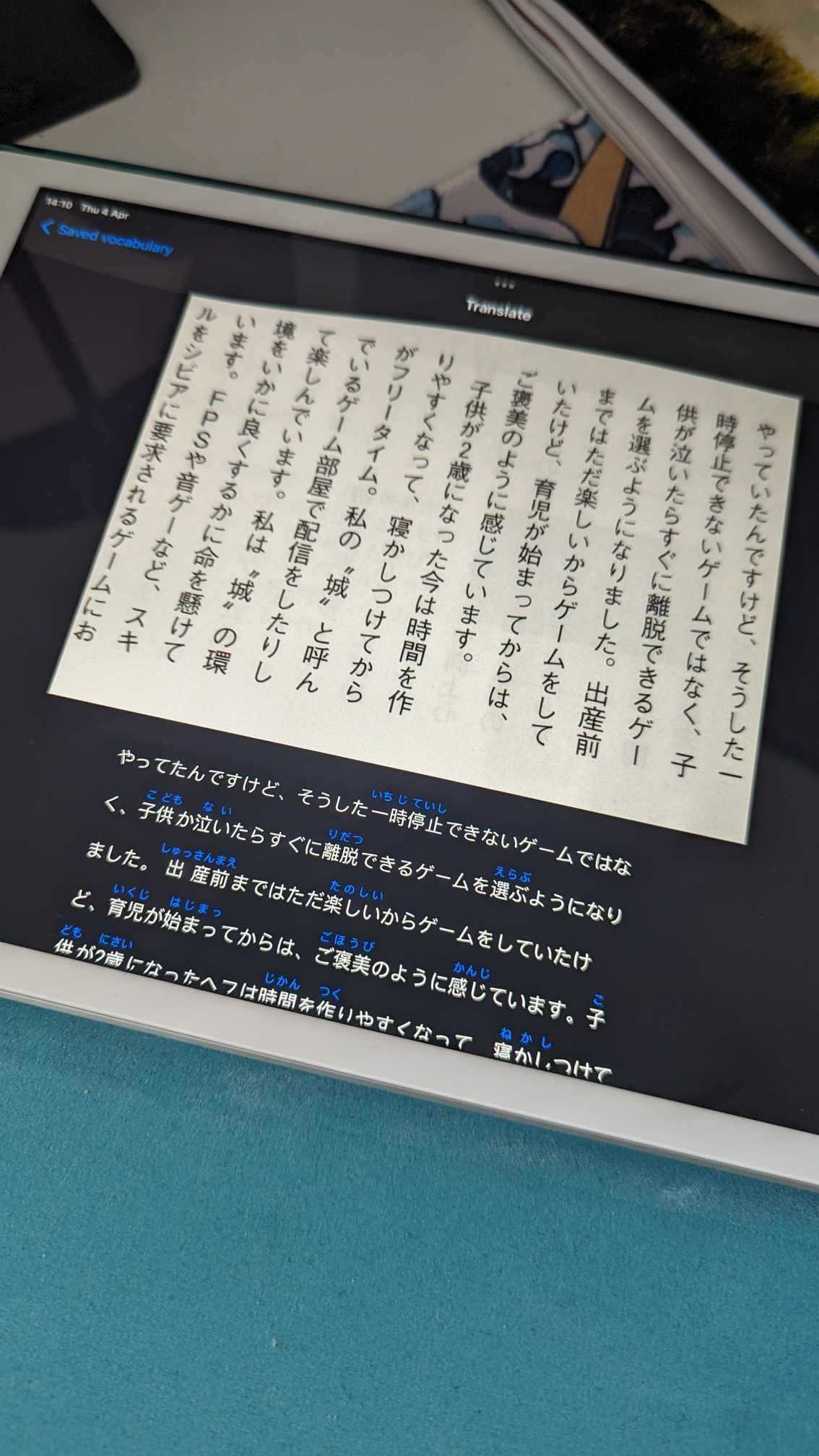

Yomeroo is a small side project built to explore SwiftUI and OCR technology. The app is meant to help with reading Japanese books: the user scans the printed text, the app displays it with furigana readings, and unknown words can be translated quickly.

The full list of feature requirements was as follows:

- Take a picture / select a picture of Japanese writing

- Implement OCR technology to read text from the images

- Display this generated text with furigana to make it easier to read

- Implement a translation feature for selected words

- Add option to save vocabulary for future study

- Support dark mode

Although the app consists of only two screens, there were quite a few complex issues to overcome. Coupled with the fact that I had very little experience with SwiftUI, this made for a very intense and fun project.

OCR

As this was a training project, I didn’t want to use expensive and complex platforms such as Google Vision or Azure’s offering for OCR. I instead opted for the ocr.space API, which offers Japanese language support and a very generous free tier. The only problem I found is that it only worked with vertical writing. As most of my needs are around reading books, I deemed it good enough, but this would be a huge letdown for a production app. Otherwise the API was simple to use, and the only thing to look out for was making sure the image size didn’t exceed the free tier allowance.
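As a rough sketch (not the app's actual code), the size check and the request could look like the following. It assumes the ocr.space `parse/image` endpoint with the `apikey` header and the `language` and `base64Image` form fields, and a response containing `ParsedResults`, `ParsedText` and `IsErroredOnProcessing`. The 1 MB limit and all Swift names (`makeOCRRequest`, `recognisedText`, `OCRError`) are assumptions made for illustration.

```swift
import Foundation
#if canImport(FoundationNetworking)
import FoundationNetworking  // URLRequest lives here on Linux
#endif

// Assumption: 1 MB upload cap on the free tier; verify against your own plan.
let assumedMaxUploadBytes = 1_024 * 1_024

enum OCRError: Error {
    case imageTooLarge(bytes: Int)
    case processingFailed
}

// Only the response fields this sketch needs.
struct OCRResponse: Decodable {
    struct ParsedResult: Decodable {
        let parsedText: String
        enum CodingKeys: String, CodingKey { case parsedText = "ParsedText" }
    }
    let parsedResults: [ParsedResult]?
    let isErroredOnProcessing: Bool

    enum CodingKeys: String, CodingKey {
        case parsedResults = "ParsedResults"
        case isErroredOnProcessing = "IsErroredOnProcessing"
    }
}

/// Builds a form-encoded POST request, rejecting images over the assumed limit.
func makeOCRRequest(jpegData: Data, apiKey: String) throws -> URLRequest {
    guard jpegData.count <= assumedMaxUploadBytes else {
        throw OCRError.imageTooLarge(bytes: jpegData.count)
    }
    // Base64 contains "+", "/" and "=", so everything outside the unreserved set is escaped.
    let allowed = CharacterSet.alphanumerics.union(CharacterSet(charactersIn: "-._~"))
    let fields: [(name: String, value: String)] = [
        ("language", "jpn"),
        ("base64Image", "data:image/jpeg;base64," + jpegData.base64EncodedString()),
    ]
    let body = fields
        .map { $0.name + "=" + ($0.value.addingPercentEncoding(withAllowedCharacters: allowed) ?? "") }
        .joined(separator: "&")

    var request = URLRequest(url: URL(string: "https://api.ocr.space/parse/image")!)
    request.httpMethod = "POST"
    request.setValue(apiKey, forHTTPHeaderField: "apikey")
    request.setValue("application/x-www-form-urlencoded", forHTTPHeaderField: "Content-Type")
    request.httpBody = Data(body.utf8)
    return request
}

/// Extracts the recognised text from the JSON response body.
func recognisedText(from responseData: Data) throws -> String {
    let response = try JSONDecoder().decode(OCRResponse.self, from: responseData)
    guard !response.isErroredOnProcessing, let results = response.parsedResults else {
        throw OCRError.processingFailed
    }
    return results.map(\.parsedText).joined(separator: "\n")
}
```

The check here is on the raw JPEG bytes. The base64 payload is roughly a third larger, so a stricter limit (or recompressing the photo before upload) may be needed in practice.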

Furigana

This was by far the most challenging part of this project, coupling linguistic and technical complexities into one head-scratching mixture. What was the problem, exactly? First of all, furigana can be displayed using ruby annotations, which are not supported in SwiftUI. I needed to dive into UIViewRepresentable, which is essentially a wrapper used to integrate UIKit views into a SwiftUI app. After I managed to display those attributed strings with furigana on top, I encountered further issues around sizing (the text needed to resize dynamically after scanning a different photo), displaying correctly inside a scroll view (for some reason, this was a problem), and supporting text selection (ruby attributes were said to work better with UILabel than with UITextView, but to make the text selectable for translation I needed UITextView). This was only the UI part of the work though.

Japanese is written with kanji, which can have different readings depending on the context. This means it’s not a simple matter of mapping one reading to each kanji and displaying it on top. The correct reading is highly situational, and from what I gathered, there is simply no way to determine it programmatically with 100% correctness. As I really didn’t want to spend weeks developing my own custom solution using dictionary files, only to reach a mediocre result, I decided to use AI instead. I introduced a ChatGPT service and created a prompt which allowed me to generate furigana readings for a given text with fairly good accuracy.
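The prompt and the response format are not shown in this write-up, so the following is only a sketch of one way to make such a reply machine-readable. It assumes the model is asked to return a JSON array of segments, each with a `text` and an optional `reading`. The prompt wording, the JSON shape and every name in the code are hypothetical.

```swift
import Foundation

/// One run of text; `reading` is nil for kana, punctuation and other text without kanji.
struct FuriganaSegment: Decodable, Equatable {
    let text: String
    let reading: String?
}

/// Hypothetical prompt wording, written for this sketch only.
func furiganaPrompt(for text: String) -> String {
    """
    Split the following Japanese text into segments. Reply with only a JSON \
    array of objects with "text" and, for segments containing kanji, "reading" \
    in hiragana as read in this context.

    \(text)
    """
}

/// Decodes the model's reply, assuming it contains nothing but the JSON array.
func parseSegments(fromModelReply reply: String) throws -> [FuriganaSegment] {
    try JSONDecoder().decode([FuriganaSegment].self, from: Data(reply.utf8))
}

/// The concatenated segments should reproduce the scanned text exactly.
/// If they do not, the model altered the text and the reply should be rejected.
func segmentsMatch(_ segments: [FuriganaSegment], original: String) -> Bool {
    segments.map(\.text).joined() == original
}

/// Plain-text rendering with readings in parentheses, handy for debugging.
func inlineReading(_ segments: [FuriganaSegment]) -> String {
    segments
        .map { segment in
            segment.reading.map { "\(segment.text)(\($0))" } ?? segment.text
        }
        .joined()
}
```

Checking the reply against the original text with `segmentsMatch` guards against the model silently rewriting or dropping characters, which matters because the readings are only useful if they sit on top of exactly what was scanned.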

Translation

Luckily for me, Japanese is a popular language to learn, so there are quite a few APIs and open source dictionary files available. For the POC I went with kanjiapi.dev, which returns meanings, readings and other data about a single kanji. It was extremely simple to use, without even a signup required, but it wouldn’t be good enough for actual use as it doesn’t support translating multi-kanji words. However, as I already have the service code and the translation sheet UI with selectable text in place, swapping it out for a more powerful dictionary API wouldn’t be a problem.
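To illustrate the single-kanji limitation, here is a minimal sketch of a lookup against the `https://kanjiapi.dev/v1/kanji/{character}` endpoint. It decodes only a subset of the response (`kanji`, `meanings`, `kun_readings`, `on_readings`), and the Swift type and function names are made up for this example.

```swift
import Foundation

/// Subset of the fields returned for a single kanji.
struct KanjiEntry: Decodable {
    let kanji: String
    let meanings: [String]
    let kunReadings: [String]
    let onReadings: [String]

    enum CodingKeys: String, CodingKey {
        case kanji, meanings
        case kunReadings = "kun_readings"
        case onReadings = "on_readings"
    }
}

/// Builds the lookup URL, percent-encoding the kanji for use in the path.
func kanjiLookupURL(for kanji: Character) -> URL? {
    guard let encoded = String(kanji)
        .addingPercentEncoding(withAllowedCharacters: .urlPathAllowed) else { return nil }
    return URL(string: "https://kanjiapi.dev/v1/kanji/" + encoded)
}

/// Decodes the JSON body of a successful lookup.
func decodeKanjiEntry(from data: Data) throws -> KanjiEntry {
    try JSONDecoder().decode(KanjiEntry.self, from: data)
}

/// Picks out the kanji in a selected word (basic CJK Unified Ideographs block only).
/// Each one needs its own request, which is why whole words cannot be translated this way.
func kanjiCharacters(in word: String) -> [Character] {
    word.filter { character in
        character.unicodeScalars.allSatisfy { (0x4E00...0x9FFF).contains($0.value) }
    }.map { $0 }
}
```

For a selection such as 勉強する, `kanjiCharacters(in:)` yields 勉 and 強, and the API can only describe each character separately rather than the word as a whole.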

SwiftUI

SwiftUI turned out to be a fun and easy-to-use framework, which I would love to explore in more detail. The code to take a picture or select one from the gallery was surprisingly simple, and community support and resources are plentiful. Obviously, displaying the custom furigana view was challenging, but it was interesting to see how UIKit views can still be used in newer apps, something that would certainly come in handy when migrating legacy, highly customised products. Despite being super easy to use in most cases, I found it somewhat lacking around the default UI elements, such as the navigation bar. The designs I was using featured a custom shaped navbar background, which in turn made changing the text color of the navbar title impossible. In the end I was forced to use some workarounds, but it is baffling how something this simple remains nearly impossible. Still, it’s a solid tool, and the preview functionality is definitely a game changer.

Summary

I was really happy to see how much I could achieve in just a few evenings of coding. It was a fun and challenging project that had me learning new things every step of the way. After spending so much time working on big applications requiring regular maintenance, it was refreshing to start and finish something quickly, and purely for fun. Onto the next thing!